A World Adrift

On AI, agency, and the future of meaning

I do not know how to code. Coding is a task that always seemed shelved away for the summer, when my schedule would free up and I could dedicate myself to hours of Python tutorials on YouTube. Then, summer would come and go, and my coding knowledge would still begin and end at the “Hour of Code” events we would have in elementary school. This was, I think, due to a very simple problem that reaches out far beyond just coding: what I wanted to do could never overcome how long it would take me to learn the skills to do it. Every fun website or cool piece of software lay on top of what would be not just one summer, but many summers of Python practice in my room. So, the ideas stayed ideas—things I would do when I had the money to pay a team of coders and the time to see it through until the end.

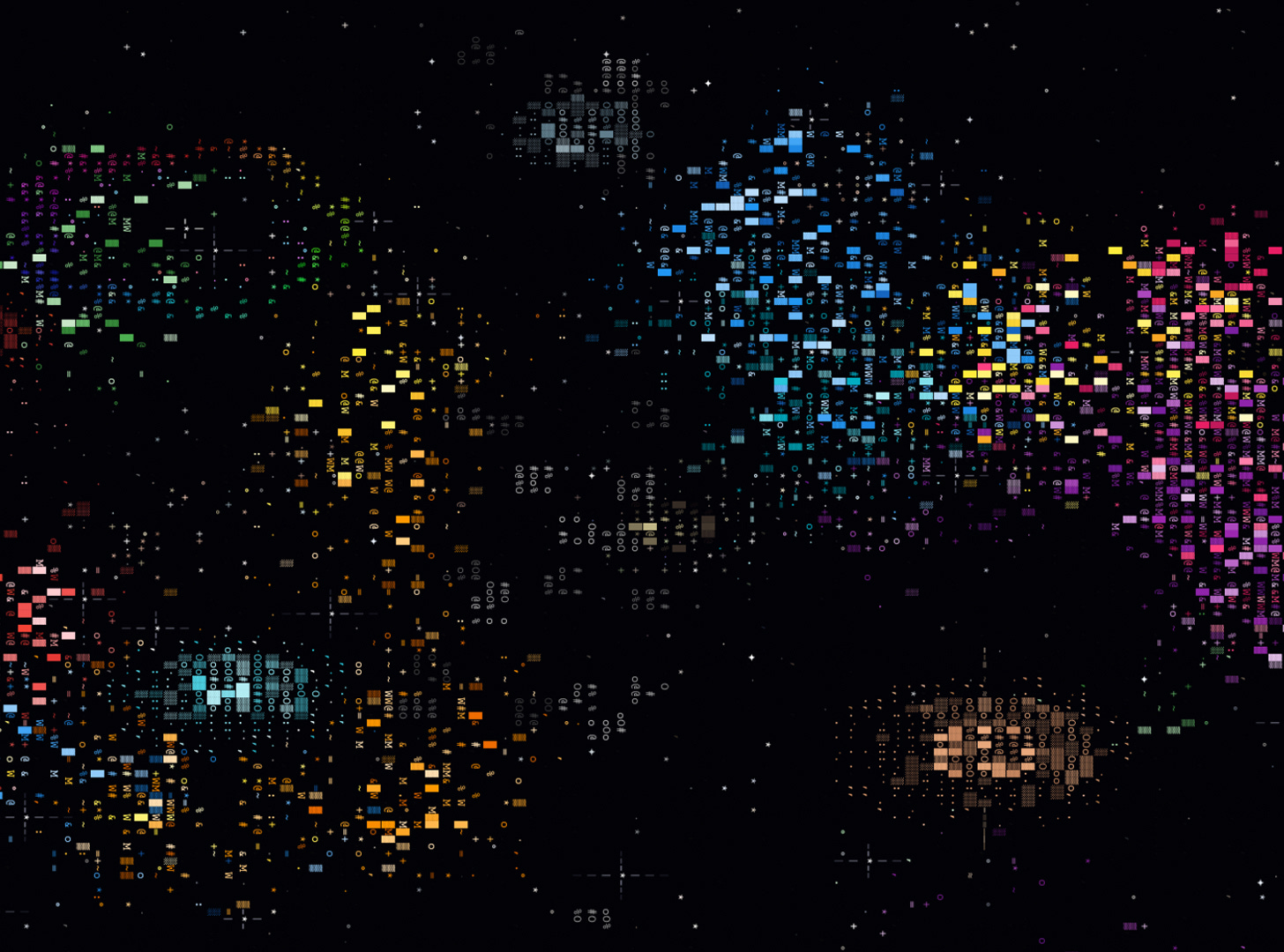

This is where I want to tell you about the first time I used a large language model, colloquially known as AI, to code something. My writer brain wants so badly to point to an exact date and a specific time: the night I sat down at my MacBook, saw an ad for ChatGPT by pure happenstance, and stayed up making the spunky websites and cool apps I daydreamed about as a kid. The thing is, I can’t remember the first time I used AI to code something for me. I don’t even recall the first time I used it at all. It seems as if Claude¹ and ChatGPT entered my life and rooted themselves in my MacBook hotbar with no intention of leaving.

I say this because, whether you view this technology as the end of human usefulness or the beginning of a world free of cancers and carnage, our role as the general public is that of an audience. We are the ones who watch Sam Altman muse about the billions he will make before the apocalypse he will bring about. Even the apologetic and ever-concerned Dario Amodei can’t seem to stop himself from quoting whatever multiple of white-collar jobs he has decided will be destroyed. The lack of agency that any of us have over how and where this technology is integrated, and by extension over the jobs it will create and the many more it will destroy, makes for an understandable anxiety. It’s a unique type of anxiety too: the sense that every time Claude makes your 9-to-5 that much easier, it is, at the same time, filing for the unemployment benefits you will need in six, twelve, or eighteen months.

This is a feeling that I have been too slow to understand and too detached from, and it feels only right to lead with it in this piece. The vitriol so many feel towards this technology is nothing like the fear of a future-scared Mennonite simpleton that those who work in and around this technology want to believe it is. It is, in fact, the men in suits, who promise a society where money does not matter and yet, curiously, have still stashed away vast fortunes, who want to destroy the social fabric of the last two centuries. That, of course, is what makes the anger and fear that have erupted in data center discussions at city halls across the country so understandable. If the general public has no way to stop the train from reaching its destination, then all we have left is to throw ourselves onto the tracks in the hopes we can slow it down, even just a bit.

Yet, even as I write about the big storm cloud over our nation’s collective conscience, I still cannot bring myself to hate this technology. There has never been a technology before that leaves me with so much awe, that empowers me with so much agency. I find myself nearly every day doing something new and creative that, in the before times, would have required a degree just to start. It would be a lie to say I do not love it.

I feel like Veruca Salt from Charlie and the Chocolate Factory, a spoiled brat whose every wish is answered and every request granted, but with the gnawing worry that I will pay for this in some way. I think the analogy is a useful one here, because it highlights just how much this technology is a multiplier for the best and worst qualities in ourselves. Our best qualities, like craving connection with another who cares about us, can just as easily be turned into our worst: ending our days by telling Claude what we did and who we saw. It was only Charlie², bound by his own restraint, who was able to enjoy the responsibility his peers could not.

I, for instance, never have used and never will use AI to write or re-write anything I post here. It is simply incomprehensible, as anyone who creates anything will tell you, to imagine automating any part of the creative process. Even if I thought Claude or ChatGPT could write a piece exactly in my voice, something so identical nobody but me would know, I would not do it. That’s because writing is what I love to do. Writing gives me meaning in a way nothing else does, and surrendering that so I could post more often or at greater length is antithetical to why I do this. I will, though, ask Claude before I post if it has any suggestions for the piece. If I find those suggestions to be useful, as I often do, I will go back in and make the changes myself.

I still don’t know how to code. I don’t think I ever will. But I do know that as long as I am alive, I will write. Whether that is in the Oval Office or the unemployment office, writing is a pillar that allows me to not worry about the rest. I believe we all have the capability and the responsibility, now more than ever, to find our own version of what writing is to me. With this, the future that AI brings seems to me to lose its scariest component: our place in it. There is no such thing as surrender and no such feeling as uselessness if you are grounded in the creation of something uniquely you. To be anchored by meaning in a world adrift is, I think, all we can ask for.

A coincidence I will choose to accept as more than luck
