On Law School, Realism, and Uncertainty

Notes on a changing world

I write a lot about realism: about being able to separate what we wish the world were from what it actually is. Realism is a passive line of thinking that, ironically, requires quite a bit of action to commit to. That’s partly because a realist’s way of thinking can, at times, be a bit drab. Life is sometimes very cruel for very little reason, and the untinted glasses of a realist offer little solace. It’s why I try to make it extra clear in my foreign policy pieces that, regardless of my policy recommendations, I still hope the oppressed of this world prevail over their oppressors. For those who want to make this world a better place, there is simply no alternative to holding on to that innate kindness and consideration.

I say all of this because we are in one of those times when the truth, in all its disheveled honesty, is more helpful than an agreeable fib. Today I’m talking specifically about artificial intelligence and what it means for young people like me. I think we are doing people my age a tremendous disservice by ignoring where this technology is going, even if where it is going is scary and unpredictable. From the very top of universities down to the individual student, there is a lack of understanding of what AI can do today, how it can be used in new and creative ways, and where and how it will be applied tomorrow. This has produced a sorry divide between AI skeptics who refuse to use the technology at all out of fear or denial and oblivious super-users who have surrendered their sentience to ChatGPT.

I’m somewhere in the middle. I use Anthropic’s Claude and Google’s Gemini daily at this point, and they have made me more productive than I thought possible. I’m able to experiment in new domains, like coding, in ways that would otherwise have taken an entire degree to approach. I also try to curate my feed on X around people working at the frontier labs. Put another way, I know enough about these tools to know that whatever life I had planned to lead in 2023 is no longer a viable pathway. I do believe there will be new types of jobs, and that we will avoid the worst-case scenario of mass unemployment leading to dramatic civil unrest, but people my age should think twice about whether the future they want is one they can have.

This all leads me to the title of this piece, and a thesis I won’t pretend to hedge: law school is a dangerous bet in 2026. It’s a bet that willfully ignores the capabilities of artificial intelligence today, tomorrow, and in ten years. It not only places a heavy financial burden on young people, a burden once excused by the lucrative earnings of past lawyers, but also draws bright and talented individuals away from new opportunities that could bring them more money and more meaning. I’m going to flesh out each of these points below, but I once again want to emphasize that I take no pleasure in putting down others’ dreams. With that said, let’s begin.

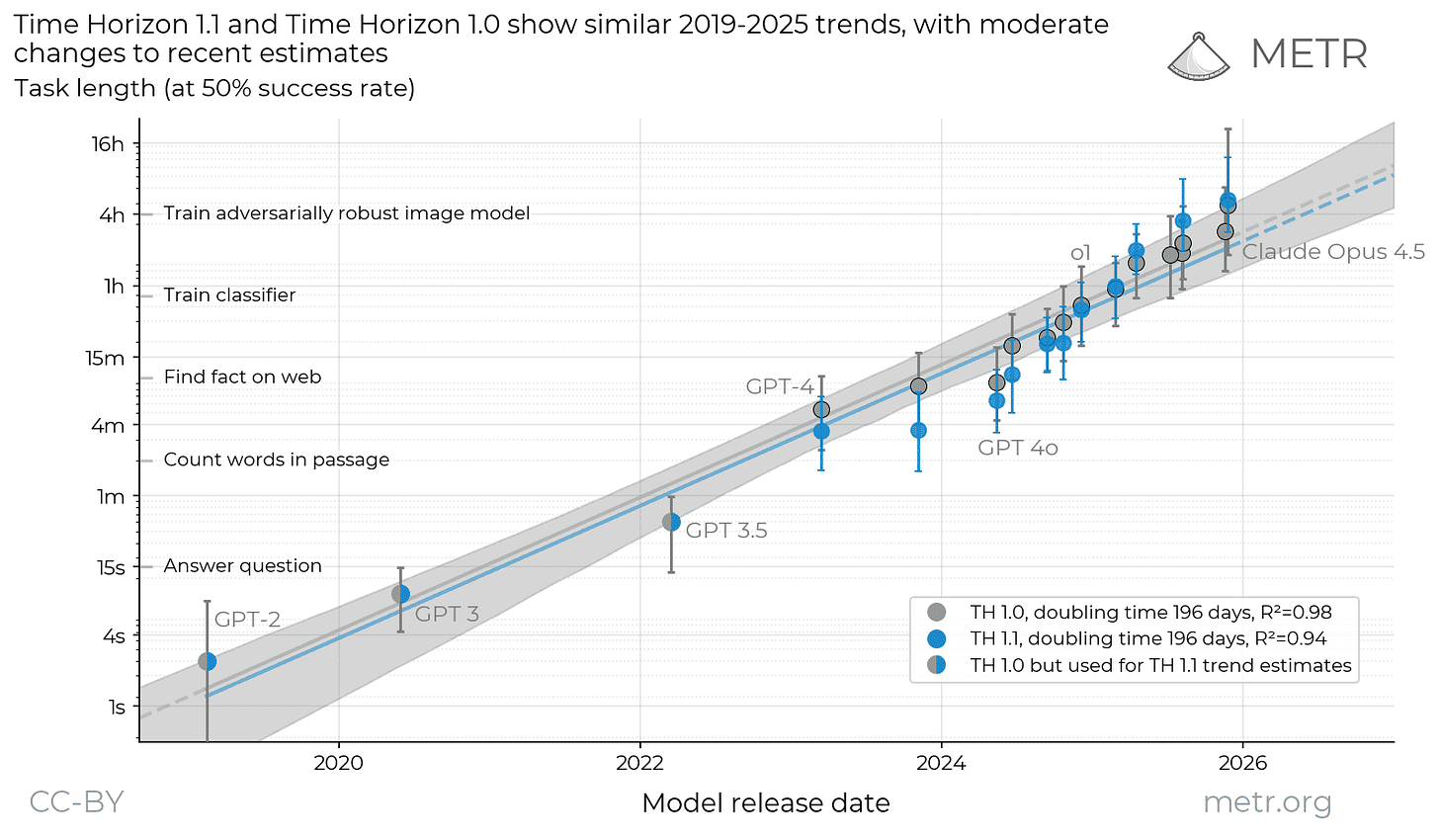

METR, which measures how long a task AI models can complete autonomously, without oversight, helps demonstrate the point I’m making. GPT-3, released by OpenAI in 2020, was the forerunner of the ChatGPT that introduced most of the public to AI as we know it today. GPT-3 could handle only tasks that take a human less than fifteen seconds, and it was barely able to answer questions coherently and accurately. Now, roughly half a decade later, Claude Opus 4.5 can take on tasks of over four hours, measured by how long they take a skilled human, before its chance of failure exceeds its chance of success.

This chart also highlights just how rapidly these advances have compounded. Whereas it took more than a year for GPT-3.5 to reach a horizon of barely a minute, it took less than nine months for Claude Opus 4.5 to add roughly 180 minutes on top of OpenAI’s o1. You don’t need to be a technical mastermind to extrapolate this into a future where models can work on their own for an entire workday, completing tasks previously given to junior-level employees.

This is already affecting software engineering and computer science jobs alike. There simply isn’t a reason to hire, train, and employ a graduate fresh out of college if a senior employee already has the equivalent of a team of junior coders in Claude Code. As the technology diffuses into more fields, like law, ladders of economic mobility that have existed for decades will be destroyed gradually, and then suddenly. These are jobs like paralegal work: low-paying, task-heavy roles that demand the research and synthesis skills today’s LLMs already have.

This doesn’t mean that junior-level employees are done for, but it does, by definition, shrink how much hiring firms do. Where a firm might once have hired a dozen junior associates to synthesize case law or sift through thousands of pages in discovery, it could now hire just one or two associates tasked with verifying an LLM’s output. It can be difficult to determine exactly how and where these employment cuts will hit across firms. That’s why I want to largely set prognostication aside, work with the facts at hand, and let you decide what you think will happen.

Right now, Claude Opus 4.5 can search a custom database that a user provides, whether raw text, PDFs, spreadsheets, or a mix, and synthesize the findings. This can be done within an enterprise, like a large law firm, to protect client confidentiality and keep the output faithful to the source material. Claude can then turn this synthesis into a PowerPoint deck, a PDF, a .docx, or an .xlsx file without much trouble. Claude can also operate autonomously inside your browser, working across your own tabs. It can send emails, keep an eye on Slack, and go back and forth with your colleagues on your behalf. And as of recently, Claude can be plugged directly into Excel and work solely with the information you provide it.

With the debut of Claude Cowork, all of this can be done without explicit instruction or handholding from a human counterpart. You simply tell Claude what you need, where to get it, and in what format you need it. The best way to judge the quality of its output is to test it yourself. I’d encourage you to sign up for a Pro plan and see how Claude handles your own tasks at work. My bet is that Claude will perform on par with or better than you, with some variance depending on what you ask.

I don’t need to throw around numbers or wax vaguely about the future for you to understand that predicting the contraction I’m describing is less like predicting doomsday and more like predicting that the sun will rise tomorrow morning. Even if junior employment stays the same at large firms thanks to increased productivity, smaller firms will suffer. There is only so much pie to slice before someone walks away with less than they started with. This matters on a macro scale for policymakers tasked with transitioning these workers, but it is absolutely critical for young adults about to make the biggest financial and time commitment of their lives so far.

I subscribe to the idea that we will find new ways and new jobs for those displaced by this technology. Societies are surprisingly sturdy things, and the return to some type of neo-feudalism that some have predicted is, in my view, both unlikely and unhelpful to dwell on. Still, we should give some preliminary thought to what these new jobs will look like and what they will ask of us. Will domain expertise in contract law be useful if we have systems more intelligent than any human, with access to every brief on every case? Probably not, and that’s okay! Jobs change, and skills change with them.

As a generally risk-tolerant person, I understand the strategic value of taking on debt now in the belief that the prestige or skills you gain will make it a worthwhile bet. It’s a bet I’m personally staking my future on as I transfer from the University of Kansas to a more prestigious, and more expensive, school on the East Coast. We live in a world of Balenciagas and Burger Kings, and pretending otherwise is a waste of time and energy. Normally, this would be enough for me to justify attending one of the select few elite law schools, even as I advised against law school more generally, and I’ll be honest that this advice may still hold.

That only holds, I think, if you treat law school as a binary choice and the opportunities outside of it as nonexistent. I think it’s a profound mistake, in this time of massive social and economic upheaval, to spend any more time than you have to credentialing yourself. This may seem backwards relative to how the status quo operates. Indeed, law school applications have historically risen as economic conditions falter, as they have over the last year. During more typical economic turbulence, such a decision makes sense. Needless to say, this is anything but typical.

The lag between what AI can do and how quickly it is adopted and implemented will open an opportunity unseen since the internet for young, talented people who are growing up with the technology. Like university administrators too old to use AI naturally, today’s companies are too saddled by yesterday’s practices to truly use AI in novel ways. Every minute spent in law school is a minute you are not spending in the workforce, where your ability to use AI to improve the quantity and quality of your work can and should put you ahead of the pack.

Forgoing law school also spares you the financial burden that pursuing a JD inevitably imposes. At both private and public law schools, inflation-adjusted tuition has risen steeply. The average student now leaves law school having spent over two hundred thousand dollars on tuition and living expenses, with the vast majority taking on loans to pay for it. That debt profoundly hinders your ability to take the kinds of risks this moment rewards.

For knowledge workers like would-be lawyers, this is both a challenge and an opportunity. The challenge is largely a mental one: accepting the changing times and moving with them, not against them, as they continue to shift. Much of the opportunity will become apparent as others across the white-collar world use AI in new companies and organizations, blazing a path for others to follow. You don’t have to be Steve Jobs to try your hand at using these tools of knowledge for your own enrichment. You just have to be willing to take what you can from the moment that has arrived.

Even in the most cynical scenario, where I am wrong about both the opportunity and the advice, law school will still be there. You can only gain by taking a few years to establish yourself in a workplace before junior employment shrinks or is cut off entirely. You would do well to invest that time and money in a different, but just as interesting and rewarding, profession in a related field.

In writing about AI, you are bound to be vaguer than you would like. We are projecting into the future and, however grounded in evidence and careful our projections, are bound to be wrong somewhere along the line. Maybe these autonomous systems stall along the way, or perhaps the scaling laws that have driven steady advances in model quality weaken or stop holding altogether. A realist can and should accept uncertainty for what it is while still acting on some forward projection.

No one person is going to have the specific answers to what someone who planned to become a lawyer should do now. Even if AI is radically different in its disruption and potential, the path to success is as difficult to define as it has always been. There will be some who go to law school and use AI to become wealthy from their work and wise in the courtroom. There will be others who forgo law school only to attend anyway in five or ten years. Outliers and exceptions are always tantalizing but rarely useful in guiding young people through a time of crisis. Thinking about your ability to operate exceptionally in whatever the moment brings, though, is.

Hi Charlie,

About 30 years ago I picked up my first AI textbook. We were taught how to use processor-power to emulate AI.

I had a project that implemented a human-machine interface, for example pulling data records out of a large database. The programming progressed much faster than could be expected. I did understand at the time that these AI concepts could eventually be used for good or evil. I was working with nuclear weapons, where a programming mistake would be catastrophic.

These days I LOVE AI. I'm no longer technically savvy. With age, I have developed cognitive issues and can no longer keep up with the underlying technology, but AI always gives me the correct answers (or answers I think are correct).

We have to let the world change ... for better or for worse. Change will happen tomorrow, and into infinity.

I won't be here to see how it ends.